Kalman filter

The Kalman filter is an efficient recursive filter that estimates the state of a linear dynamic system from a series of noisy measurements. It is used in a wide range of applications, from radar to computer vision, and is an important topic in control theory.


Examples of problems solved by the filter

One example is providing accurate, continuously updated information about the position and velocity of an object from a series of position measurements, each of which is somewhat inaccurate. In radar target tracking, for instance, the available information about the position, velocity, and acceleration of the observed object is very noisy. The Kalman filter uses a known mathematical model of the object's dynamics, which describes which changes of the object's state are possible at all, to suppress measurement errors and provide an accurate estimate of the object's position at the present time (filtering), at future times (prediction), or at past times (interpolation or smoothing).

As another example, consider an old, slow car that is known to take at least 10 seconds to accelerate from 0 to 100 km/h. Suppose its speedometer is faulty, and its reading can differ from the true speed by up to 60 km/h. Starting from rest, which is measured exactly because the wheels are not turning, the driver floors the accelerator, and after 5 seconds the speedometer shows 110 km/h. The driver knows the car cannot accelerate that fast, so, taking into account how far off the speedometer can be, he concludes that the speed is most likely about 50 km/h. In the same way, the Kalman filter uses information about the measurement errors and about the rules that govern the dynamic system to minimize the effect of measurement errors and provide the most accurate information about the state of the system.
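The driver's reasoning can be sketched as intersecting two ranges of feasible speeds: one allowed by the car's dynamics and one allowed by the speedometer's error bound. This is a bounded-error illustration, not the Kalman filter itself (which works with Gaussian uncertainties rather than hard bounds). The function name is hypothetical, and the sketch assumes that the acceleration limit means at most 100/10 = 10 km/h gained per second and that the speedometer error is at most ±60 km/h.

```python
def feasible_speed(t_s, max_accel_kmh_per_s, reading_kmh, max_error_kmh):
    """Hypothetical helper: intersect the speed range allowed by the dynamics
    with the speed range allowed by the sensor error bound."""
    # Starting from rest, the car cannot exceed max_accel * t.
    dyn_lo, dyn_hi = 0.0, max_accel_kmh_per_s * t_s
    # The true speed lies within +/- max_error of the reading and cannot be negative.
    meas_lo = max(0.0, reading_kmh - max_error_kmh)
    meas_hi = reading_kmh + max_error_kmh
    lo, hi = max(dyn_lo, meas_lo), min(dyn_hi, meas_hi)
    if lo > hi:
        raise ValueError("model and measurement are inconsistent")
    return lo, hi


# At least 10 s for 0-100 km/h means at most 10 km/h gained per second (assumed uniform bound).
print(feasible_speed(5.0, 100.0 / 10.0, 110.0, 60.0))  # (50.0, 50.0)
```

Here the two ranges touch at a single point, which is why the driver can be so confident; with Gaussian errors the Kalman filter instead produces a weighted compromise together with its variance.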

Dynamic system model

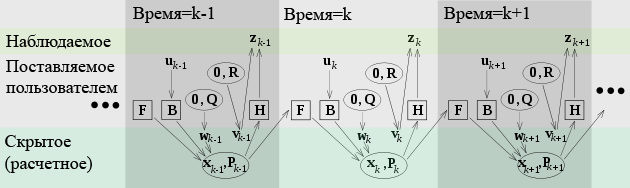

Kalman filters are based on linear dynamic systems discretized in time. They are modeled as Markov chains built on linear operators perturbed by Gaussian (normally distributed) noise. The state of the system is represented as a vector of real numbers. At each time step, a linear operator is applied to the state vector, some noise is added, and optionally some information about the control inputs acting on the system, if known. Another linear operator, with different noise, then produces the observable information about the state of the system. The Kalman filter can be regarded as an analogue of the hidden Markov model, with the key difference that the state variables are continuous (real-valued), whereas a hidden Markov model has a finite state space. In addition, hidden Markov models can use arbitrary distributions for the next values of the state variables, whereas Kalman filters support only the Gaussian noise model. There is a close correspondence between the equations of the Kalman filter and those of the hidden Markov model. This topic, along with several other models, is reviewed in detail by Roweis and Ghahramani (1999).[1]

To use the Kalman filter to estimate the internal state of a process from a series of noisy measurements, the process must be modeled in the general form required by the Kalman filter. This means specifying the matrices <math>\textbf{F}_k</math>, <math>\textbf{H}_k</math>, <math>\textbf{Q}_k</math>, <math>\textbf{R}_k</math>, and sometimes <math>\textbf{B}_k</math> for each time step k, as described below.

The Kalman filter model assumes that the true state at time k evolves from the state at time (k − 1) according to

<math> \textbf{x}_{k} = \textbf{F}_{k} \textbf{x}_{k-1} + \textbf{B}_{k} \textbf{u}_{k} + \textbf{w}_{k} </math>,

where

- <math>\textbf{F}_k</math> is the state-transition model, applied to the previous state <math>\textbf{x}_{k-1}</math>;

- <math>\textbf{B}_k</math> is the control-input model, applied to the control vector <math>\textbf{u}_k</math>;

- <math>\textbf{w}_k</math> is the process noise, assumed to be drawn from a zero-mean multivariate normal distribution with covariance matrix <math>\textbf{Q}_k</math>:

<math>\textbf{w}_{k} \sim N(0, \textbf{Q}_k) </math>

At time k, an observation (or measurement) <math>\textbf{z}_k</math> of the true state <math>\textbf{x}_k</math> is made according to the measurement model

- <math>\textbf{z}_{k} = \textbf{H}_{k} \textbf{x}_{k} + \textbf{v}_{k}</math>

where <math>\textbf{H}_k</math> is the observation model, which maps the true state space into the observed space, and <math>\textbf{v}_k</math> is the observation noise, assumed to be zero-mean and normally distributed with covariance matrix <math>\textbf{R}_k</math>:

<math>\textbf{v}_{k} \sim N(0, \textbf{R}_k) </math>

The initial state and the noise vectors at every step, <math>\{\textbf{x}_0, \textbf{w}_1, \dots, \textbf{w}_k, \textbf{v}_1, \dots, \textbf{v}_k\}</math>, are all assumed to be mutually independent.
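A minimal NumPy sketch of drawing a state trajectory and measurements from this model. It assumes time-invariant matrices for brevity; the function `simulate` and all numeric values below are illustrative and not part of the original text.

```python
import numpy as np


def simulate(F, B, H, Q, R, x0, controls, rng):
    """Hypothetical helper: sample x_k = F x_{k-1} + B u_k + w_k and z_k = H x_k + v_k."""
    x = np.asarray(x0, dtype=float)
    states, measurements = [], []
    for u in controls:
        w = rng.multivariate_normal(np.zeros(Q.shape[0]), Q)  # process noise w_k ~ N(0, Q)
        x = F @ x + B @ u + w
        v = rng.multivariate_normal(np.zeros(R.shape[0]), R)  # measurement noise v_k ~ N(0, R)
        states.append(x)
        measurements.append(H @ x + v)
    return np.array(states), np.array(measurements)


rng = np.random.default_rng(0)
F = np.array([[1.0, 1.0], [0.0, 1.0]])   # illustrative constant-velocity transition
B = np.zeros((2, 1))                     # no control effect in this example
H = np.array([[1.0, 0.0]])               # only the first state component is observed
Q = 0.01 * np.eye(2)
R = np.array([[1.0]])
xs, zs = simulate(F, B, H, Q, R, [0.0, 1.0], [np.zeros(1)] * 50, rng)
print(xs.shape, zs.shape)  # (50, 2) (50, 1)
```

Setting Q and R to zero turns the same function into a noise-free simulation, which is handy for checking a filter implementation against a known trajectory.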

Many real dynamic systems do not fit this model exactly. However, because the Kalman filter is designed to operate on noisy data, its estimates are often a very good approximation of the correct answer, which makes the filter useful even in such situations. The variations of Kalman filtering described below can handle more complex models.

The Kalman filter

The Kalman filter is a recursive estimator. This means that only the estimated state from the previous time step and the current measurement are needed to compute the estimate for the current state. In contrast to batch estimation techniques, no history of observations and/or estimates is required. In what follows, the notation <math>\hat{\textbf{x}}_{n|m}</math> represents the estimate of <math>\textbf{x}</math> at time n given observations up to and including time m.

The state of the filter is represented by two variables:

- <math>\hat{\textbf{x}}_{k|k}</math>, the estimate of the state at time k given observations up to and including time k;

- <math>\textbf{P}_{k|k}</math>, the error covariance matrix (a measure of the estimated accuracy of the state estimate).

The Kalman filter has two distinct phases: Predict and Update. The predict phase uses the state estimate from the previous timestep to produce an estimate of the state at the current timestep. In the update phase, measurement information at the current timestep is used to refine this prediction and arrive at a new, ideally more accurate, state estimate, again for the current timestep.

Predict

| Predicted state | <math>\hat{\textbf{x}}_{k|k-1} = \textbf{F}_{k}\hat{\textbf{x}}_{k-1|k-1} + \textbf{B}_{k} \textbf{u}_{k} </math> |
| Predicted estimate covariance | <math>\textbf{P}_{k|k-1} = \textbf{F}_{k} \textbf{P}_{k-1|k-1} \textbf{F}_{k}^{\text{T}} + \textbf{Q}_{k} </math> |

Update

| Innovation or measurement residual | <math> \tilde{\textbf{y}}_k = \textbf{z}_k - \textbf{H}_k\hat{\textbf{x}}_{k|k-1} </math> |
| Innovation (or residual) covariance | <math>\textbf{S}_k = \textbf{H}_k \textbf{P}_{k|k-1} \textbf{H}_k^\text{T} + \textbf{R}_k </math> |
| Optimal Kalman gain | <math>\textbf{K}_k = \textbf{P}_{k|k-1}\textbf{H}_k^\text{T}\textbf{S}_k^{-1}</math> |
| Updated state estimate | <math>\hat{\textbf{x}}_{k|k} = \hat{\textbf{x}}_{k|k-1} + \textbf{K}_k\tilde{\textbf{y}}_k</math> |
| Updated estimate covariance | <math>\textbf{P}_{k|k} = (I - \textbf{K}_k \textbf{H}_k) \textbf{P}_{k|k-1}</math> |

The formula for the updated estimate covariance above is valid only for the optimal Kalman gain. Using any other gain requires the more general formula given in the Derivations section.
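The predict and update equations in the tables can be transcribed almost line by line. The following is a minimal NumPy sketch; the function names `kf_predict` and `kf_update` are hypothetical. It computes the gain by solving a linear system instead of forming the inverse of the innovation covariance explicitly, which is a common numerical choice rather than something the equations require.

```python
import numpy as np


def kf_predict(x, P, F, Q, B=None, u=None):
    """Hypothetical helper: x_{k|k-1} = F x_{k-1|k-1} + B u_k, P_{k|k-1} = F P F^T + Q."""
    x = F @ x
    if B is not None and u is not None:
        x = x + B @ u
    P = F @ P @ F.T + Q
    return x, P


def kf_update(x, P, z, H, R):
    """Hypothetical helper: measurement update with the optimal Kalman gain."""
    y = z - H @ x                               # innovation
    S = H @ P @ H.T + R                         # innovation covariance
    K = np.linalg.solve(S.T, (P @ H.T).T).T     # K = P H^T S^{-1} without inverting S
    x = x + K @ y
    P = (np.eye(P.shape[0]) - K @ H) @ P        # simplified form: optimal gain only
    return x, P


# Scalar example: prior N(0, 1), no process noise, one measurement z = 2 with R = 1.
x, P = np.array([0.0]), np.array([[1.0]])
F, Q = np.array([[1.0]]), np.array([[0.0]])
H, R = np.array([[1.0]]), np.array([[1.0]])
x, P = kf_predict(x, P, F, Q)
x, P = kf_update(x, P, np.array([2.0]), H, R)
print(x, P)  # [1.] [[0.5]]
```

With equal prior and measurement variances the gain is 0.5, so the estimate lands halfway between the prediction and the measurement and the variance is halved.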

Invariants

If the model is accurate, and the values for <math>\hat{\textbf{x}}_{0|0}</math> and <math>\textbf{P}_{0|0}</math> accurately reflect the distribution of the initial state values, then the following invariants are preserved. All estimates have zero mean error:

- <math>\textrm{E}[\textbf{x}_k - \hat{\textbf{x}}_{k|k}] = \textrm{E}[\textbf{x}_k - \hat{\textbf{x}}_{k|k-1}] = 0</math>

- <math>\textrm{E}[\tilde{\textbf{y}}_k] = 0</math>

where <math>\textrm{E}[\xi]</math> is the expected value of <math>\xi</math>. The covariance matrices accurately reflect the covariance of the estimates:

- <math>\textbf{P}_{k|k} = \textrm{cov}(\textbf{x}_k - \hat{\textbf{x}}_{k|k})</math>

- <math>\textbf{P}_{k|k-1} = \textrm{cov}(\textbf{x}_k - \hat{\textbf{x}}_{k|k-1})</math>

- <math>\textbf{S}_{k} = \textrm{cov}(\tilde{\textbf{y}}_k)</math>
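These invariants can be checked empirically with a Monte Carlo simulation. The sketch below assumes a hypothetical scalar random-walk model (F = H = 1, illustrative Q and R) whose initial state is drawn from N(0, P_{0|0}). If the filter is consistent, the sample mean of the error x_k − x̂_{k|k} should be near zero and its sample variance near P_{k|k}.

```python
import numpy as np


def check_invariants(q=0.5, r=2.0, p0=1.0, steps=30, runs=20000, seed=0):
    """Hypothetical helper: run many independent scalar filters (F = H = 1) and return
    the sample mean and variance of x_k - x_hat_{k|k} together with the filter's P_{k|k}."""
    rng = np.random.default_rng(seed)
    x = rng.normal(0.0, np.sqrt(p0), runs)   # true x_0 ~ N(x_hat_{0|0}, P_{0|0}), x_hat_{0|0} = 0
    x_hat = np.zeros(runs)
    P = p0
    for _ in range(steps):
        x = x + rng.normal(0.0, np.sqrt(q), runs)   # x_k = x_{k-1} + w_k
        z = x + rng.normal(0.0, np.sqrt(r), runs)   # z_k = x_k + v_k
        P_pred = P + q                              # predict (F = 1, so x_hat is unchanged)
        K = P_pred / (P_pred + r)                   # optimal gain with S_k = P_pred + r
        x_hat = x_hat + K * (z - x_hat)             # update with the innovation
        P = (1.0 - K) * P_pred
    err = x - x_hat
    return err.mean(), err.var(), P


mean_err, var_err, P = check_invariants()
print(mean_err, var_err, P)
```

The same harness can expose a mis-specified filter: passing a different q or r to the filter than to the simulated truth makes the sample variance drift away from P.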

Examples

Consider a truck on perfectly frictionless, infinitely long straight rails. Initially the truck is stationary at position 0, but it is buffeted this way and that by random acceleration. We measure the position of the truck every Δt seconds, but these measurements are imprecise; we want to maintain a model of where the truck is and what its velocity is. We show here how we derive the model from which we create our Kalman filter.

There are no controls on the truck, so we ignore Bk and uk. Since F, H, R and Q are constant, their time indices are dropped.

The position and velocity of the truck are described by the linear state space

- <math>\textbf{x}_{k} = \begin{bmatrix} x \\ \dot{x} \end{bmatrix} </math>

where <math>\dot{x}</math> is the velocity, that is, the derivative of position with respect to time.

We assume that between the (k − 1)th and kth timestep the truck undergoes a constant acceleration of ak that is normally distributed, with mean 0 and standard deviation σa. From Newton's laws of motion we conclude that

- <math>\textbf{x}_{k} = \textbf{F} \textbf{x}_{k-1} + \textbf{G}a_{k}</math>

where

- <math>\textbf{F} = \begin{bmatrix} 1 & \Delta t \\ 0 & 1 \end{bmatrix}</math>

and

- <math>\textbf{G} = \begin{bmatrix} \frac{\Delta t^{2}}{2} \\ \Delta t \end{bmatrix} </math>

We find that

- <math> \textbf{Q} = \textrm{cov}(\textbf{G}a) = \textrm{E}[(\textbf{G}a)(\textbf{G}a)^{\text{T}}] = \textbf{G} \textrm{E}[a^2] \textbf{G}^{\text{T}} = \textbf{G}[\sigma_a^2]\textbf{G}^{\text{T}} = \sigma_a^2 \textbf{G}\textbf{G}^{\text{T}}</math> (since σa is a scalar).

At each time step, a noisy measurement of the true position of the truck is made. Let us suppose the noise is also normally distributed, with mean 0 and standard deviation σz.

- <math>\textbf{z}_{k} = \textbf{H x}_{k} + \textbf{v}_{k}</math>

where

- <math>\textbf{H} = \begin{bmatrix} 1 & 0 \end{bmatrix} </math>

and

- <math>\textbf{R} = \textrm{E}[\textbf{v}_k \textbf{v}_k^{\text{T}}] = \begin{bmatrix} \sigma_z^2 \end{bmatrix} </math>

We know the initial starting state of the truck with perfect precision, so we initialize

- <math>\hat{\textbf{x}}_{0|0} = \begin{bmatrix} 0 \\ 0 \end{bmatrix} </math>

and to tell the filter that we know the exact position, we give it a zero covariance matrix:

- <math>\textbf{P}_{0|0} = \begin{bmatrix} 0 & 0 \\ 0 & 0 \end{bmatrix} </math>

If the initial position and velocity are not known perfectly, the covariance matrix should be initialized with a suitably large number, say B, on its diagonal.

- <math>\textbf{P}_{0|0} = \begin{bmatrix} B & 0 \\ 0 & B \end{bmatrix} </math>

The filter will then prefer the information from the first measurements over the information already in the model.
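The truck model above can be run end to end. This minimal NumPy sketch assumes Δt = 1, σ<sub>a</sub> = 0.2 and σ<sub>z</sub> = 3 (values chosen only for illustration), starts from the exactly known initial state with P<sub>0|0</sub> = 0, and compares the root-mean-square position error of the filter with that of the raw measurements. With a fixed random seed the numbers are reproducible, but they say nothing beyond this toy setup.

```python
import numpy as np


def truck_filter(n_steps=500, dt=1.0, sigma_a=0.2, sigma_z=3.0, seed=1):
    """Hypothetical helper: simulate the truck and filter its noisy position readings."""
    rng = np.random.default_rng(seed)
    F = np.array([[1.0, dt], [0.0, 1.0]])
    G = np.array([[dt**2 / 2.0], [dt]])
    Q = sigma_a**2 * (G @ G.T)                    # Q = sigma_a^2 G G^T
    H = np.array([[1.0, 0.0]])
    R = np.array([[sigma_z**2]])
    x = np.zeros(2)                               # truth: at rest at position 0
    x_hat, P = np.zeros(2), np.zeros((2, 2))      # exact initial knowledge: P_{0|0} = 0
    sq_err_filt = sq_err_meas = 0.0
    for _ in range(n_steps):
        x = F @ x + G[:, 0] * rng.normal(0.0, sigma_a)   # random acceleration a_k
        z = H @ x + rng.normal(0.0, sigma_z, 1)          # noisy position measurement
        x_hat, P = F @ x_hat, F @ P @ F.T + Q            # predict
        S = H @ P @ H.T + R
        K = P @ H.T @ np.linalg.inv(S)
        x_hat = x_hat + K @ (z - H @ x_hat)              # update
        P = (np.eye(2) - K @ H) @ P
        sq_err_filt += (x_hat[0] - x[0]) ** 2
        sq_err_meas += (z[0] - x[0]) ** 2
    return np.sqrt(sq_err_filt / n_steps), np.sqrt(sq_err_meas / n_steps)


rmse_filt, rmse_meas = truck_filter()
print(f"filtered RMSE {rmse_filt:.2f}, raw measurement RMSE {rmse_meas:.2f}")
```

Replacing the zero initial covariance with B on the diagonal (and a wrong initial guess) is a quick way to see the filter lean on the first measurements, as described above.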

Derivations

Deriving the posterior estimate covariance matrix

Starting with our invariant on the error covariance Pk|k as above

- <math>\textbf{P}_{k|k} = \textrm{cov}(\textbf{x}_{k} - \hat{\textbf{x}}_{k|k})</math>

substitute in the definition of <math>\hat{\textbf{x}}_{k|k}</math>

- <math>\textbf{P}_{k|k} = \textrm{cov}(\textbf{x}_{k} - (\hat{\textbf{x}}_{k|k-1} + \textbf{K}_k\tilde{\textbf{y}}_{k}))</math>

and substitute <math>\tilde{\textbf{y}}_k</math>

- <math>\textbf{P}_{k|k} = \textrm{cov}(\textbf{x}_{k} - (\hat{\textbf{x}}_{k|k-1} + \textbf{K}_k(\textbf{z}_k - \textbf{H}_k\hat{\textbf{x}}_{k|k-1})))</math>

and <math>\textbf{z}_{k}</math>

- <math>\textbf{P}_{k|k} = \textrm{cov}(\textbf{x}_{k} - (\hat{\textbf{x}}_{k|k-1} + \textbf{K}_k(\textbf{H}_k\textbf{x}_k + \textbf{v}_k - \textbf{H}_k\hat{\textbf{x}}_{k|k-1})))</math>

and by collecting the error vectors we get

- <math>\textbf{P}_{k|k} = \textrm{cov}((I - \textbf{K}_k \textbf{H}_{k})(\textbf{x}_k - \hat{\textbf{x}}_{k|k-1}) - \textbf{K}_k \textbf{v}_k )</math>

Since the measurement error vk is uncorrelated with the other terms, this becomes

- <math>\textbf{P}_{k|k} = \textrm{cov}((I - \textbf{K}_k \textbf{H}_{k})(\textbf{x}_k - \hat{\textbf{x}}_{k|k-1})) + \textrm{cov}(\textbf{K}_k \textbf{v}_k )</math>

by the properties of vector covariance this becomes

- <math>\textbf{P}_{k|k} = (I - \textbf{K}_k \textbf{H}_{k})\textrm{cov}(\textbf{x}_k - \hat{\textbf{x}}_{k|k-1})(I - \textbf{K}_k \textbf{H}_{k})^{\text{T}} + \textbf{K}_k\textrm{cov}(\textbf{v}_k )\textbf{K}_k^{\text{T}}</math>

which, using our invariant on Pk|k-1 and the definition of Rk becomes

- <math>\textbf{P}_{k|k} = (I - \textbf{K}_k \textbf{H}_{k}) \textbf{P}_{k|k-1} (I - \textbf{K}_k \textbf{H}_{k})^\text{T} + \textbf{K}_k \textbf{R}_k \textbf{K}_k^\text{T} </math>

This formula (sometimes known as the "Joseph form" of the covariance update equation) is valid for any value of <math>\textbf{K}_k</math>. If <math>\textbf{K}_k</math> is the optimal Kalman gain, it can be simplified further, as shown below.

Kalman gain derivation

The Kalman filter is a minimum mean-square error estimator. The error in the posterior state estimation is

- <math>\textbf{x}_{k} - \hat{\textbf{x}}_{k|k}</math>

We seek to minimize the expected value of the square of the magnitude of this vector, <math>\textrm{E}[|\textbf{x}_{k} - \hat{\textbf{x}}_{k|k}|^2]</math>. This is equivalent to minimizing the trace of the posterior estimate covariance matrix <math> \textbf{P}_{k|k} </math>. By expanding out the terms in the equation above and collecting, we get:

- <math>\textbf{P}_{k|k} = \textbf{P}_{k|k-1} - \textbf{K}_k \textbf{H}_k \textbf{P}_{k|k-1} - \textbf{P}_{k|k-1} \textbf{H}_k^\text{T} \textbf{K}_k^\text{T} + \textbf{K}_k (\textbf{H}_k \textbf{P}_{k|k-1} \textbf{H}_k^\text{T} + \textbf{R}_k) \textbf{K}_k^\text{T}</math>

- <math>\textbf{P}_{k|k} = \textbf{P}_{k|k-1} - \textbf{K}_k \textbf{H}_k \textbf{P}_{k|k-1} - \textbf{P}_{k|k-1} \textbf{H}_k^\text{T} \textbf{K}_k^\text{T} + \textbf{K}_k \textbf{S}_k\textbf{K}_k^\text{T}</math>

The trace is minimized when the matrix derivative is zero:

- <math>\frac{\partial \; \textrm{tr}(\textbf{P}_{k|k})}{\partial \;\textbf{K}_k} = -2 (\textbf{H}_k \textbf{P}_{k|k-1})^\text{T} + 2 \textbf{K}_k \textbf{S}_k = 0</math>

Solving this for Kk yields the Kalman gain:

- <math>\textbf{K}_k \textbf{S}_k = (\textbf{H}_k \textbf{P}_{k|k-1})^\text{T} = \textbf{P}_{k|k-1} \textbf{H}_k^\text{T}</math>

- <math> \textbf{K}_{k} = \textbf{P}_{k|k-1} \textbf{H}_k^\text{T} \textbf{S}_k^{-1}</math>

This gain, which is known as the optimal Kalman gain, is the one that yields MMSE estimates when used.
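The optimality claim can be checked numerically. Using the Joseph form, which holds for any gain, the trace of the posterior covariance should grow whenever the optimal gain is perturbed. The matrices below are random and purely illustrative, and `joseph` is a hypothetical helper name.

```python
import numpy as np


def joseph(P_pred, H, R, K):
    """Hypothetical helper: posterior covariance for an arbitrary gain K (Joseph form)."""
    A = np.eye(P_pred.shape[0]) - K @ H
    return A @ P_pred @ A.T + K @ R @ K.T


rng = np.random.default_rng(0)
M = rng.normal(size=(3, 3))
P_pred = M @ M.T + np.eye(3)          # random symmetric positive-definite prior covariance
H = rng.normal(size=(2, 3))
R = np.diag([0.5, 2.0])
S = H @ P_pred @ H.T + R
K_opt = P_pred @ H.T @ np.linalg.inv(S)

best = np.trace(joseph(P_pred, H, R, K_opt))
# Every random perturbation of the optimal gain should increase the trace.
worse = [np.trace(joseph(P_pred, H, R, K_opt + 1e-2 * rng.normal(size=K_opt.shape)))
         for _ in range(100)]
print(min(worse) > best)  # True
```

Algebraically, replacing <math>\textbf{K}_k</math> by <math>\textbf{K}_k + \Delta</math> adds exactly <math>\Delta \textbf{S}_k \Delta^\text{T}</math> to <math>\textbf{P}_{k|k}</math> when <math>\textbf{K}_k</math> is optimal, so the trace can only increase.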

Simplification of the posterior error covariance formula

The formula used to calculate the posterior error covariance can be simplified when the Kalman gain equals the optimal value derived above. Multiplying both sides of our Kalman gain formula on the right by <math>\textbf{S}_k \textbf{K}_k^\text{T}</math>, it follows that

- <math>\textbf{K}_k \textbf{S}_k \textbf{K}_k^T = \textbf{P}_{k|k-1} \textbf{H}_k^T \textbf{K}_k^T</math>

Referring back to our expanded formula for the posterior error covariance,

- <math> \textbf{P}_{k|k} = \textbf{P}_{k|k-1} - \textbf{K}_k \textbf{H}_k \textbf{P}_{k|k-1} - \textbf{P}_{k|k-1} \textbf{H}_k^T \textbf{K}_k^T + \textbf{K}_k \textbf{S}_k \textbf{K}_k^T</math>

we find the last two terms cancel out, giving

- <math> \textbf{P}_{k|k} = \textbf{P}_{k|k-1} - \textbf{K}_k \textbf{H}_k \textbf{P}_{k|k-1} = (I - \textbf{K}_{k} \textbf{H}_{k}) \textbf{P}_{k|k-1}.</math>

This formula is computationally cheaper and thus nearly always used in practice, but it is correct only for the optimal gain. If arithmetic precision is unusually low, causing problems with numerical stability, or if a non-optimal Kalman gain is deliberately used, this simplification cannot be applied; the posterior error covariance formula derived above (the Joseph form) must be used instead.
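A quick numerical check, with illustrative 2×2 matrices and hypothetical helper names, that the simplified formula agrees with the Joseph form for the optimal gain but not for a deliberately sub-optimal one:

```python
import numpy as np


def joseph(P_pred, H, R, K):
    """Hypothetical helper: Joseph form, valid for any gain."""
    A = np.eye(P_pred.shape[0]) - K @ H
    return A @ P_pred @ A.T + K @ R @ K.T


def simplified(P_pred, H, K):
    """Hypothetical helper: (I - K H) P, valid only for the optimal gain."""
    return (np.eye(P_pred.shape[0]) - K @ H) @ P_pred


P_pred = np.array([[4.0, 1.0], [1.0, 2.0]])
H = np.array([[1.0, 0.0]])
R = np.array([[1.0]])
K_opt = P_pred @ H.T @ np.linalg.inv(H @ P_pred @ H.T + R)
K_bad = 0.5 * K_opt   # deliberately sub-optimal gain

print(np.allclose(joseph(P_pred, H, R, K_opt), simplified(P_pred, H, K_opt)))  # True
print(np.allclose(joseph(P_pred, H, R, K_bad), simplified(P_pred, H, K_bad)))  # False
```

With the halved gain the simplified formula no longer describes the actual error covariance, which is why a filter that tunes its gain must keep the Joseph form.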

Relationship to the digital filter

The Kalman filter can be regarded as an adaptive low-pass infinite impulse response (IIR) digital filter, with a cut-off frequency that depends on the ratio between the process and measurement (or observation) noise, as well as on the estimate covariance. Frequency response is, however, rarely of interest when designing state estimators such as the Kalman filter, whereas for digital filters such as IIR and FIR filters it is usually of primary concern. For the Kalman filter, the main concern is estimation accuracy, which is most often assessed with empirical Monte Carlo simulations in which the "truth" (the true state) is known.
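A sketch of the digital-filter view for the simplest case, assuming a hypothetical scalar random-walk model (F = H = 1, constant Q = q and R = r). Once the gain settles to its steady-state value k, the update becomes x̂<sub>k</sub> = (1 − k) x̂<sub>k−1</sub> + k z<sub>k</sub>, which is a first-order IIR low-pass (exponential smoothing) filter whose cut-off is set by the ratio q/r. The helper names are illustrative.

```python
import math


def steady_state_gain(q, r, iters=1000):
    """Hypothetical helper: iterate the scalar random-walk Riccati recursion to its fixed point."""
    p = 1.0
    for _ in range(iters):
        p_pred = p + q                 # predict
        k = p_pred / (p_pred + r)      # optimal gain
        p = (1.0 - k) * p_pred         # update
    return k


def iir_lowpass(zs, k, x0=0.0):
    """Hypothetical helper: constant-gain filter x_k = (1 - k) x_{k-1} + k z_k."""
    out, x = [], x0
    for z in zs:
        x = (1.0 - k) * x + k * z
        out.append(x)
    return out


q, r = 0.1, 1.0
k = steady_state_gain(q, r)
# Closed-form steady state: p_pred solves p_pred^2 - q p_pred - q r = 0.
p_pred = (q + math.sqrt(q * q + 4.0 * q * r)) / 2.0
print(abs(k - p_pred / (p_pred + r)) < 1e-9)  # True
```

Lowering q relative to r lowers the steady-state gain and therefore the cut-off frequency, so the filter smooths more heavily.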

Relationship to recursive Bayesian estimation

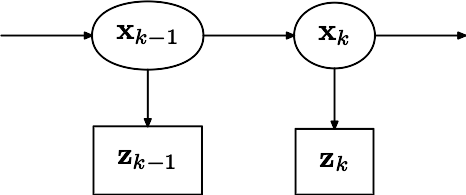

The true state is assumed to be an unobserved Markov process, and the measurements are the observed states of a hidden Markov model.

Because of the Markov assumption, the true state is conditionally independent of all earlier states given the immediately previous state.

- <math>p(\textbf{x}_k|\textbf{x}_0,\dots,\textbf{x}_{k-1}) = p(\textbf{x}_k|\textbf{x}_{k-1})</math>

Similarly the measurement at the k-th timestep is dependent only upon the current state and is conditionally independent of all other states given the current state.

- <math>p(\textbf{z}_k|\textbf{x}_0,\dots,\textbf{x}_{k}) = p(\textbf{z}_k|\textbf{x}_{k} )</math>

Using these assumptions the probability distribution over all states of the hidden Markov model can be written simply as:

- <math>p(\textbf{x}_0,\dots,\textbf{x}_k,\textbf{z}_1,\dots,\textbf{z}_k) = p(\textbf{x}_0)\prod_{i=1}^k p(\textbf{z}_i|\textbf{x}_i)p(\textbf{x}_i|\textbf{x}_{i-1})</math>

However, when the Kalman filter is used to estimate the state x, the probability distribution of interest is that associated with the current states conditioned on the measurements up to the current timestep. (This is achieved by marginalizing out the previous states and dividing by the probability of the measurement set.)

This leads to the predict and update steps of the Kalman filter written probabilistically. The probability distribution associated with the predicted state is the sum (integral) of the products of the probability distribution associated with the transition from the (k − 1)-th timestep to the k-th and the probability distribution associated with the previous state, over all possible <math>\textbf{x}_{k-1}</math>.

- <math> p(\textbf{x}_k|\textbf{Z}_{k-1}) = \int p(\textbf{x}_k | \textbf{x}_{k-1}) p(\textbf{x}_{k-1} | \textbf{Z}_{k-1} ) \, d\textbf{x}_{k-1} </math>

The measurement set up to time t is

- <math> \textbf{Z}_{t} = \left \{ \textbf{z}_{1},\dots,\textbf{z}_{t} \right \} </math>

The probability distribution of the update is proportional to the product of the measurement likelihood and the predicted state.

- <math> p(\textbf{x}_k|\textbf{Z}_{k}) = \frac{p(\textbf{z}_k|\textbf{x}_k) p(\textbf{x}_k|\textbf{Z}_{k-1})}{p(\textbf{z}_k|\textbf{Z}_{k-1})} </math>

The denominator

- <math>p(\textbf{z}_k|\textbf{Z}_{k-1}) = \int p(\textbf{z}_k|\textbf{x}_k) p(\textbf{x}_k|\textbf{Z}_{k-1}) d\textbf{x}_k</math>

is a normalization term.

The remaining probability density functions are

- <math> p(\textbf{x}_k | \textbf{x}_{k-1}) = N(\textbf{F}_k\textbf{x}_{k-1}, \textbf{Q}_k)</math>

- <math> p(\textbf{z}_k|\textbf{x}_k) = N(\textbf{H}_{k}\textbf{x}_k, \textbf{R}_k) </math>

- <math> p(\textbf{x}_{k-1}|\textbf{Z}_{k-1}) = N(\hat{\textbf{x}}_{k-1|k-1},\textbf{P}_{k-1|k-1} )</math>

Note that the PDF at the previous timestep is inductively assumed to be the estimated state and covariance. This is justified because, as an optimal estimator, the Kalman filter makes best use of the measurements, therefore the PDF for <math>\mathbf{x}_k</math> given the measurements <math>\mathbf{Z}_k</math> is the Kalman filter estimate.
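For a scalar linear-Gaussian model, the predict integral and the Bayes update can be evaluated by brute force on a grid and compared with the closed-form Kalman formulas. The model parameters below are hypothetical, and the grid integration is a simple Riemann-sum approximation, not a method from the original text.

```python
import numpy as np


def gauss(x, mean, var):
    """Scalar normal density N(x; mean, var), vectorized over arrays."""
    return np.exp(-0.5 * (x - mean) ** 2 / var) / np.sqrt(2.0 * np.pi * var)


# Hypothetical scalar model: F = 2, H = 1, Q = 0.5, R = 1,
# previous posterior N(1, 0.8) and current measurement z = 3.
F, H, Q, R = 2.0, 1.0, 0.5, 1.0
x_prev, P_prev, z = 1.0, 0.8, 3.0

grid = np.linspace(-15.0, 15.0, 1201)
dx = grid[1] - grid[0]
# Predict: p(x_k | Z_{k-1}) = integral of p(x_k | x_{k-1}) p(x_{k-1} | Z_{k-1}) dx_{k-1}.
prior = gauss(grid[:, None], F * grid[None, :], Q) @ gauss(grid, x_prev, P_prev) * dx
# Update: likelihood times prediction, divided by the normalizer p(z_k | Z_{k-1}).
post = gauss(z, H * grid, R) * prior
post /= post.sum() * dx
mean_grid = (grid * post).sum() * dx
var_grid = ((grid - mean_grid) ** 2 * post).sum() * dx

# Closed-form Kalman predict and update for the same model.
x_pred, P_pred = F * x_prev, F * P_prev * F + Q
K = P_pred * H / (H * P_pred * H + R)
x_post, P_post = x_pred + K * (z - H * x_pred), (1.0 - K * H) * P_pred
print(mean_grid, x_post)
print(var_grid, P_post)
```

The grid approach generalizes to non-Gaussian densities but scales poorly with dimension, which is the practical reason the Gaussian closed form matters.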

Information filter

In the information filter, or inverse covariance filter, the estimated covariance and estimated state are replaced by the information matrix and information vector respectively. These are defined as:

- <math>\textbf{Y}_{k|k} = \textbf{P}_{k|k}^{-1} </math>

- <math>\hat{\textbf{y}}_{k|k} = \textbf{P}_{k|k}^{-1}\hat{\textbf{x}}_{k|k} </math>

Similarly the predicted covariance and state have equivalent information forms, defined as:

- <math>\textbf{Y}_{k|k-1} = \textbf{P}_{k|k-1}^{-1} </math>

- <math>\hat{\textbf{y}}_{k|k-1} = \textbf{P}_{k|k-1}^{-1}\hat{\textbf{x}}_{k|k-1} </math>

as have the measurement covariance and measurement vector, which are defined as:

- <math>\textbf{I}_{k} = \textbf{H}_{k}^{\text{T}} \textbf{R}_{k}^{-1} \textbf{H}_{k} </math>

- <math>\textbf{i}_{k} = \textbf{H}_{k}^{\text{T}} \textbf{R}_{k}^{-1} \textbf{z}_{k} </math>

The information update now becomes a trivial sum.

- <math>\textbf{Y}_{k|k} = \textbf{Y}_{k|k-1} + \textbf{I}_{k}</math>

- <math>\hat{\textbf{y}}_{k|k} = \hat{\textbf{y}}_{k|k-1} + \textbf{i}_{k}</math>

The main advantage of the information filter is that N measurements can be filtered at each timestep simply by summing their information matrices and vectors.

- <math>\textbf{Y}_{k|k} = \textbf{Y}_{k|k-1} + \sum_{j=1}^N \textbf{I}_{k,j}</math>

- <math>\hat{\textbf{y}}_{k|k} = \hat{\textbf{y}}_{k|k-1} + \sum_{j=1}^N \textbf{i}_{k,j}</math>
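A sketch of the multi-measurement information update, assuming two hypothetical sensors with independent noise and illustrative numbers. It checks that summing the information contributions gives the same posterior as a single covariance-form update with the measurements stacked.

```python
import numpy as np


def info_update(Y_pred, y_pred, Hs, Rs, zs):
    """Hypothetical helper: add I_{k,j} = H^T R^-1 H and i_{k,j} = H^T R^-1 z per sensor."""
    Y, y = Y_pred.copy(), y_pred.copy()
    for H, R, z in zip(Hs, Rs, zs):
        R_inv = np.linalg.inv(R)
        Y += H.T @ R_inv @ H
        y += H.T @ R_inv @ z
    return Y, y


x_pred = np.array([1.0, -1.0])
P_pred = np.array([[2.0, 0.3], [0.3, 1.0]])
Hs = [np.array([[1.0, 0.0]]), np.array([[1.0, 1.0]])]
Rs = [np.array([[0.5]]), np.array([[1.5]])]
zs = [np.array([1.4]), np.array([0.2])]

Y_pred = np.linalg.inv(P_pred)
Y, y = info_update(Y_pred, Y_pred @ x_pred, Hs, Rs, zs)
P_info, x_info = np.linalg.inv(Y), np.linalg.solve(Y, y)

# Same result from one covariance-form update with stacked measurements.
H, R, z = np.vstack(Hs), np.diag([0.5, 1.5]), np.concatenate(zs)
K = P_pred @ H.T @ np.linalg.inv(H @ P_pred @ H.T + R)
x_cov = x_pred + K @ (z - H @ x_pred)
P_cov = (np.eye(2) - K @ H) @ P_pred
print(np.allclose(x_info, x_cov), np.allclose(P_info, P_cov))  # True True
```

The equivalence relies on the sensors' noises being independent, so that the stacked R is block diagonal.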

For the prediction step, the information matrix and vector can be converted back to their state-space equivalents, or alternatively the information-space prediction can be used:

- <math>\textbf{M}_{k} = [\textbf{F}_{k}^{-1}]^{\text{T}} \textbf{Y}_{k-1|k-1} \textbf{F}_{k}^{-1} </math>

- <math>\textbf{C}_{k} = \textbf{M}_{k} [\textbf{M}_{k}+\textbf{Q}_{k}^{-1}]^{-1}</math>

- <math>\textbf{L}_{k} = I - \textbf{C}_{k} </math>

- <math>\textbf{Y}_{k|k-1} = \textbf{L}_{k} \textbf{M}_{k} \textbf{L}_{k}^{\text{T}} + \textbf{C}_{k} \textbf{Q}_{k}^{-1} \textbf{C}_{k}^{\text{T}}</math>

- <math>\hat{\textbf{y}}_{k|k-1} = \textbf{L}_{k} [\textbf{F}_{k}^{-1}]^{\text{T}}\hat{\textbf{y}}_{k-1|k-1} </math>

Note that if F and Q are time invariant these values can be cached. Note also that F and Q need to be invertible.
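The information-space prediction can be checked against the covariance form <math>\textbf{P}_{k|k-1} = \textbf{F}_{k} \textbf{P}_{k-1|k-1} \textbf{F}_{k}^{\text{T}} + \textbf{Q}_{k}</math>. This sketch assumes no control input and uses illustrative invertible F and Q; `info_predict` is a hypothetical name.

```python
import numpy as np


def info_predict(Y_prev, y_prev, F, Q):
    """Hypothetical helper: information-space prediction (no control input)."""
    F_inv = np.linalg.inv(F)
    M = F_inv.T @ Y_prev @ F_inv
    Q_inv = np.linalg.inv(Q)
    C = M @ np.linalg.inv(M + Q_inv)
    L = np.eye(F.shape[0]) - C
    Y_pred = L @ M @ L.T + C @ Q_inv @ C.T
    y_pred = L @ F_inv.T @ y_prev
    return Y_pred, y_pred


F = np.array([[1.0, 0.5], [0.0, 1.0]])
Q = np.array([[0.2, 0.05], [0.05, 0.1]])
P = np.array([[1.0, 0.2], [0.2, 0.5]])
x = np.array([2.0, -1.0])

Y_pred, y_pred = info_predict(np.linalg.inv(P), np.linalg.inv(P) @ x, F, Q)
P_pred = F @ P @ F.T + Q   # covariance-form prediction for comparison
print(np.allclose(np.linalg.inv(Y_pred), P_pred),
      np.allclose(np.linalg.solve(Y_pred, y_pred), F @ x))  # True True
```

If F and Q are time-invariant, the inverses computed at the top of the function are the values that can be cached between steps.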

Fixed-lag smoother

The optimal fixed-lag smoother provides the optimal estimate of <math>\hat{\textbf{x}}_{k - N | k}</math> for a given fixed-lag <math>N</math> using the measurements from <math>\textbf{z}_{1}</math> to <math>\textbf{z}_{k}</math>. It can be derived using the previous theory via an augmented state, and the main equation of the filter is the following:

<math> \begin{bmatrix} \hat{\textbf{x}}_{t|t} \\ \hat{\textbf{x}}_{t-1|t} \\ \vdots \\ \hat{\textbf{x}}_{t-N+1|t} \\ \end{bmatrix} = \begin{bmatrix} I \\ 0 \\ \vdots \\ 0 \\ \end{bmatrix} \hat{\textbf{x}}_{t|t-1} + \begin{bmatrix} 0 & \ldots & 0 \\ I & 0 & \vdots \\ \vdots & \ddots & \vdots \\ 0 & \ldots & I \\ \end{bmatrix} \begin{bmatrix} \hat{\textbf{x}}_{t-1|t-1} \\ \hat{\textbf{x}}_{t-2|t-1} \\ \vdots \\ \hat{\textbf{x}}_{t-N|t-1} \\ \end{bmatrix} + \begin{bmatrix} K^{(1)} \\ K^{(2)} \\ \vdots \\ K^{(N)} \\ \end{bmatrix} y_{t|t-1} </math>

where:

1) <math> \hat{\textbf{x}}_{t|t-1} </math> is estimated via a standard Kalman filter;

2) <math> y_{t|t-1} = z(t) - H\hat{\textbf{x}}_{t|t-1} </math> is the innovation produced by the estimate of the standard Kalman filter;

3) the various <math> \hat{\textbf{x}}_{t-i|t} </math> with <math> i = 1,\ldots,N-1 </math> are new variables, i.e. they do not appear in the standard Kalman filter;

4) the gains are computed via the following scheme:

<math> K^{(i)} = P^{(i)} H^{T} \left[ H P H^{T} + R \right]^{-1} </math>

and

<math> P^{(i)} = P \left[ \left[ F - K H \right]^{T} \right]^{i} </math>

where <math> P </math> and <math> K </math> are the prediction error covariance and the gains of the standard Kalman filter.

Note that if we define the estimation error covariance

<math> P_{i} := E \left[ \left( \textbf{x}_{t-i} - \hat{\textbf{x}}_{t-i|t} \right)^{*} \left( \textbf{x}_{t-i} - \hat{\textbf{x}}_{t-i|t} \right) | z_{1} \ldots z_{t} \right] </math>

then we have that the improvement on the estimation of <math> \textbf{x}_{t-i} </math> is given by:

<math> P - P_{i} = \sum_{j = 0}^{i} \left[ P^{(j)} H^{T} \left[ H P H^{T} + R \right]^{-1} H \left( P^{(j)} \right)^{T} \right] </math>

Fixed-interval filters

The optimal fixed-interval smoother provides the optimal estimate of <math>\hat{\textbf{x}}_{k | n}</math> (<math>k \leq n</math>) using the measurements from a fixed interval <math>\textbf{z}_{1}</math> to <math>\textbf{z}_{n}</math>. This is also called Kalman smoothing.

There is an efficient two-pass algorithm for this, the Rauch–Tung–Striebel algorithm. Its main equations are the following (assuming <math>\textbf{B}_{k} = \textbf{0}</math>):

- forward pass: regular Kalman filter algorithm

- backward pass: <math> \hat{\textbf{x}}_{k|n} = \tilde{\textbf{F}}_k \hat{\textbf{x}}_{k+1|n} + \tilde{\textbf{K}}_k \hat{\textbf{x}}_{k+1|k} </math>, where

- <math> \tilde{\textbf{F}}_k = \textbf{F}_k^{-1} (\textbf{I} - \textbf{Q}_k \textbf{P}_{k+1|k}^{-1}) </math>

- <math> \tilde{\textbf{K}}_k = \textbf{F}_k^{-1} \textbf{Q}_k \textbf{P}_{k+1|k}^{-1} </math>
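A minimal NumPy sketch of the two-pass smoother, assuming time-invariant F, H, Q, R and no control input. The backward pass is written in the commonly used gain form <math>\hat{\textbf{x}}_{k|n} = \hat{\textbf{x}}_{k|k} + \textbf{C}_k(\hat{\textbf{x}}_{k+1|n} - \hat{\textbf{x}}_{k+1|k})</math> with <math>\textbf{C}_k = \textbf{P}_{k|k}\textbf{F}^\text{T}\textbf{P}_{k+1|k}^{-1}</math>, which is algebraically equivalent to the <math>\tilde{\textbf{F}}_k</math>, <math>\tilde{\textbf{K}}_k</math> form above when F is invertible; the usage example checks that equivalence numerically. The smoothed covariance uses the standard RTS recursion <math>\textbf{P}_{k|n} = \textbf{P}_{k|k} + \textbf{C}_k(\textbf{P}_{k+1|n} - \textbf{P}_{k+1|k})\textbf{C}_k^\text{T}</math>. The function name and data are hypothetical.

```python
import numpy as np


def rts_smoother(zs, F, H, Q, R, x0, P0):
    """Hypothetical helper: forward Kalman pass, then Rauch-Tung-Striebel backward pass."""
    xf, Pf, xp, Pp = [], [], [], []          # filtered and one-step-predicted estimates
    x, P = x0, P0
    for z in zs:
        x, P = F @ x, F @ P @ F.T + Q        # predict
        xp.append(x)
        Pp.append(P)
        K = P @ H.T @ np.linalg.inv(H @ P @ H.T + R)
        x = x + K @ (z - H @ x)              # update
        P = (np.eye(len(x)) - K @ H) @ P
        xf.append(x)
        Pf.append(P)
    xs, Ps = xf[:], Pf[:]                    # last smoothed estimate equals last filtered one
    for k in range(len(zs) - 2, -1, -1):
        C = Pf[k] @ F.T @ np.linalg.inv(Pp[k + 1])          # smoother gain
        xs[k] = xf[k] + C @ (xs[k + 1] - xp[k + 1])
        Ps[k] = Pf[k] + C @ (Ps[k + 1] - Pp[k + 1]) @ C.T
    return [np.array(a) for a in (xs, Ps, xf, Pf, xp, Pp)]


rng = np.random.default_rng(0)
F = np.array([[1.0, 1.0], [0.0, 1.0]])
H = np.array([[1.0, 0.0]])
Q = 0.05 * np.eye(2)
R = np.array([[1.0]])
zs = [np.array([float(t) + rng.normal()]) for t in range(20)]
xs, Ps, xf, Pf, xp, Pp = rts_smoother(zs, F, H, Q, R, np.zeros(2), 10.0 * np.eye(2))

# Check the F~ / K~ form of the backward pass at one step.
k = 5
F_inv, Pp_inv = np.linalg.inv(F), np.linalg.inv(Pp[k + 1])
F_t = F_inv @ (np.eye(2) - Q @ Pp_inv)
K_t = F_inv @ Q @ Pp_inv
print(np.allclose(F_t @ xs[k + 1] + K_t @ xp[k + 1], xs[k]))
```

Because the backward pass needs all filtered and predicted estimates, the smoother stores O(n) states, unlike the filter itself.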

Non-linear filters

The basic Kalman filter is limited to a linear assumption. However, most non-trivial systems are non-linear. The non-linearity can be associated either with the process model or with the observation model or with both.

Extended Kalman filter

In the extended Kalman filter (EKF), the state transition and observation models need not be linear functions of the state but may instead be differentiable functions.

- <math>\textbf{x}_{k} = f(\textbf{x}_{k-1}, \textbf{u}_{k}) + \textbf{w}_{k}</math>

- <math>\textbf{z}_{k} = h(\textbf{x}_{k}) + \textbf{v}_{k}</math>

The function f can be used to compute the predicted state from the previous estimate and similarly the function h can be used to compute the predicted measurement from the predicted state. However, f and h cannot be applied to the covariance directly. Instead a matrix of partial derivatives (the Jacobian) is computed.

At each timestep the Jacobian is evaluated with current predicted states. These matrices can be used in the Kalman filter equations. This process essentially linearizes the non-linear function around the current estimate.
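One EKF step can be sketched as follows; the caller supplies f, h and functions returning their Jacobians. This is a minimal illustration under the additive-noise model above. The helper names and the range-measurement example (a 2-D position observed only through its distance from the origin, with a random-walk motion model) are hypothetical.

```python
import numpy as np


def ekf_step(x, P, z, f, F_jac, h, H_jac, Q, R, u=None):
    """Hypothetical helper: f and h propagate the mean, Jacobians propagate the covariance."""
    F = F_jac(x, u)                      # Jacobian of f at the previous estimate
    x_pred = f(x, u)
    P_pred = F @ P @ F.T + Q
    H = H_jac(x_pred)                    # Jacobian of h at the predicted state
    S = H @ P_pred @ H.T + R
    K = P_pred @ H.T @ np.linalg.inv(S)
    x_new = x_pred + K @ (z - h(x_pred))
    P_new = (np.eye(len(x)) - K @ H) @ P_pred
    return x_new, P_new


def f(x, u):
    return x                             # hypothetical random-walk motion model


def F_jac(x, u):
    return np.eye(2)


def h(x):
    return np.array([np.hypot(x[0], x[1])])   # range to the origin


def H_jac(x):
    return (x / np.hypot(x[0], x[1]))[None, :]  # d(range)/dx as a 1x2 row


x, P = np.array([3.0, 4.0]), np.eye(2)
x, P = ekf_step(x, P, np.array([5.5]), f, F_jac, h, H_jac, 0.01 * np.eye(2), np.array([[0.1]]))
print(x)  # pushed outward along the radial direction
```

With linear f and h the Jacobians are constant matrices and the step reduces to the ordinary Kalman filter.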

Unscented Kalman filter

When the state transition and observation models – that is, the predict and update functions <math>f</math> and <math>h</math> (see above) – are highly non-linear, the extended Kalman filter can give particularly poor performance.[2] This is because the mean and covariance are propagated through linearization of the underlying non-linear model. The unscented Kalman filter (UKF) [2] uses a deterministic sampling technique known as the unscented transform to pick a minimal set of sample points (called sigma points) around the mean. These sigma points are then propagated through the non-linear functions, from which the mean and covariance of the estimate are then recovered. The result is a filter which more accurately captures the true mean and covariance. (This can be verified using Monte Carlo sampling or through a Taylor series expansion of the posterior statistics.) In addition, this technique removes the requirement to explicitly calculate Jacobians, which for complex functions can be a difficult task in itself (i.e., requiring complicated derivatives if done analytically or being computationally costly if done numerically).

Predict

As with the EKF, the UKF prediction can be used independently from the UKF update, in combination with a linear (or indeed EKF) update, or vice versa.

The estimated state and covariance are augmented with the mean and covariance of the process noise.

- <math> \textbf{x}_{k-1|k-1}^{a} = [ \hat{\textbf{x}}_{k-1|k-1}^{T} \quad E[\textbf{w}_{k}^{T}] \ ]^{T} </math>

- <math> \textbf{P}_{k-1|k-1}^{a} = \begin{bmatrix} \textbf{P}_{k-1|k-1} & 0 \\ 0 & \textbf{Q}_{k} \end{bmatrix} </math>

A set of 2L+1 sigma points is derived from the augmented state and covariance where L is the dimension of the augmented state.

- <math>\chi_{k-1|k-1}^{0} = \textbf{x}_{k-1|k-1}^{a}</math>

- <math>\chi_{k-1|k-1}^{i} = \textbf{x}_{k-1|k-1}^{a} + \left( \sqrt{ (L + \lambda) \textbf{P}_{k-1|k-1}^{a} } \right)_{i}, \quad i = 1,\dots,L</math>

- <math>\chi_{k-1|k-1}^{i} = \textbf{x}_{k-1|k-1}^{a} - \left( \sqrt{ (L + \lambda) \textbf{P}_{k-1|k-1}^{a} } \right)_{i-L}, \quad i = L+1,\dots,2L</math>

where <math>\left( \sqrt{ (L + \lambda) \textbf{P}_{k-1|k-1}^{a} } \right)_{i}</math> is the ith column of the matrix square root of <math>(L + \lambda) \textbf{P}_{k-1|k-1}^{a}</math>, using the definition that a square root A of a matrix B satisfies <math>B \equiv A A^T</math>.

The matrix square root should be calculated using numerically efficient and stable methods such as the Cholesky decomposition.

The sigma points are propagated through the transition function f.

- <math>\chi_{k|k-1}^{i} = f(\chi_{k-1|k-1}^{i}) \quad i = 0..2L </math>

The weighted sigma points are recombined to produce the predicted state and covariance.

- <math>\hat{\textbf{x}}_{k|k-1} = \sum_{i=0}^{2L} W_{s}^{i} \chi_{k|k-1}^{i} </math>

- <math>\textbf{P}_{k|k-1} = \sum_{i=0}^{2L} W_{c}^{i}\ [\chi_{k|k-1}^{i} - \hat{\textbf{x}}_{k|k-1}] [\chi_{k|k-1}^{i} - \hat{\textbf{x}}_{k|k-1}]^{T} </math>

where the weights for the state and covariance are given by:

- <math>W_{s}^{0} = \frac{\lambda}{L+\lambda}</math>

- <math>W_{c}^{0} = \frac{\lambda}{L+\lambda} + (1 - \alpha^2 + \beta)</math>

- <math>W_{s}^{i} = W_{c}^{i} = \frac{1}{2(L+\lambda)}</math>

- <math>\lambda = \alpha^2 (L+\kappa) - L \,\! </math>

Typical values for <math>\alpha</math>, <math>\beta</math>, and <math>\kappa</math> are <math>10^{-3}</math>, 2, and 0, respectively. (These values should suffice for most purposes.)
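The sigma-point construction and weights above can be sketched in NumPy as follows (function names are hypothetical). It uses the Cholesky factor A with A A<sup>T</sup> = (L + λ)P, so the columns of A are the required square-root columns. Augmentation with the noise terms is left to the caller, who can pass the augmented mean and covariance. The example propagates N(1, 0.25) through x ↦ x², whose exact mean is m² + s² = 1.25.

```python
import numpy as np


def sigma_points(x, P, alpha=1e-3, beta=2.0, kappa=0.0):
    """Hypothetical helper: 2L+1 sigma points and weights; x, P may already be augmented."""
    L = len(x)
    lam = alpha**2 * (L + kappa) - L
    A = np.linalg.cholesky((L + lam) * P)     # A @ A.T == (L + lam) P
    chi = np.vstack([x, x + A.T, x - A.T])     # rows: chi^0, chi^1..chi^L, chi^{L+1}..chi^{2L}
    Ws = np.full(2 * L + 1, 1.0 / (2 * (L + lam)))
    Wc = Ws.copy()
    Ws[0] = lam / (L + lam)
    Wc[0] = lam / (L + lam) + (1 - alpha**2 + beta)
    return chi, Ws, Wc


def unscented_transform(chi, Ws, Wc, g):
    """Hypothetical helper: push sigma points through g and recombine mean and covariance."""
    Y = np.array([g(c) for c in chi])
    mean = Ws @ Y
    d = Y - mean
    cov = (Wc[:, None] * d).T @ d              # sum_i Wc_i d_i d_i^T
    return mean, cov


x, P = np.array([1.0]), np.array([[0.25]])
chi, Ws, Wc = sigma_points(x, P)
mean, cov = unscented_transform(chi, Ws, Wc, lambda v: v**2)
print(mean)  # approximately [1.25] = m^2 + s^2
```

With the default α = 10<sup>-3</sup> the central weight is a large negative number, so the mean is computed from nearly cancelling terms; this is harmless in double precision for well-scaled problems but worth knowing when debugging.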

Update

The predicted state and covariance are augmented as before, except now with the mean and covariance of the measurement noise.

- <math> \textbf{x}_{k|k-1}^{a} = [ \hat{\textbf{x}}_{k|k-1}^{T} \quad E[\textbf{v}_{k}^{T}] \ ]^{T} </math>

- <math> \textbf{P}_{k|k-1}^{a} = \begin{bmatrix} \textbf{P}_{k|k-1} & 0 \\ 0 & \textbf{R}_{k} \end{bmatrix} </math>

As before, a set of 2L + 1 sigma points is derived from the augmented state and covariance where L is the dimension of the augmented state.

- <math>\chi_{k|k-1}^{0} = \textbf{x}_{k|k-1}^{a}</math>

- <math>\chi_{k|k-1}^{i} = \textbf{x}_{k|k-1}^{a} + \left( \sqrt{ (L + \lambda) \textbf{P}_{k|k-1}^{a} } \right)_{i}, \quad i = 1,\dots,L</math>

- <math>\chi_{k|k-1}^{i} = \textbf{x}_{k|k-1}^{a} - \left( \sqrt{ (L + \lambda) \textbf{P}_{k|k-1}^{a} } \right)_{i-L}, \quad i = L+1,\dots,2L</math>

Alternatively if the UKF prediction has been used the sigma points themselves can be augmented along the following lines

- <math> \chi_{k|k-1} := [ \chi_{k|k-1}^T \quad E[\textbf{v}_{k}^{T}] \ ]^{T} \pm \sqrt{ (L + \lambda) \textbf{R}_{k}^{a} }</math>

where

- <math> \textbf{R}_{k}^{a} = \begin{bmatrix} 0 & 0 \\ 0 & \textbf{R}_{k} \end{bmatrix} </math>

The sigma points are projected through the observation function h.

- <math>\gamma_{k}^{i} = h(\chi_{k|k-1}^{i}) \quad i = 0..2L </math>

The weighted sigma points are recombined to produce the predicted measurement and predicted measurement covariance.

- <math>\hat{\textbf{z}}_{k} = \sum_{i=0}^{2L} W_{s}^{i} \gamma_{k}^{i} </math>

- <math>\textbf{P}_{z_{k}z_{k}} = \sum_{i=0}^{2L} W_{c}^{i}\ [\gamma_{k}^{i} - \hat{\textbf{z}}_{k}] [\gamma_{k}^{i} - \hat{\textbf{z}}_{k}]^{T} </math>

The state-measurement cross-covariance matrix,

- <math>\textbf{P}_{x_{k}z_{k}} = \sum_{i=0}^{2L} W_{c}^{i}\ [\chi_{k|k-1}^{i} - \hat{\textbf{x}}_{k|k-1}] [\gamma_{k}^{i} - \hat{\textbf{z}}_{k}]^{T} </math>

is used to compute the UKF Kalman gain.

- <math>K_{k} = \textbf{P}_{x_{k}z_{k}} \textbf{P}_{z_{k}z_{k}}^{-1}</math>

As with the Kalman filter, the updated state is the predicted state plus the innovation weighted by the Kalman gain,

- <math>\hat{\textbf{x}}_{k|k} = \hat{\textbf{x}}_{k|k-1} + K_{k}( \textbf{z}_{k} - \hat{\textbf{z}}_{k} )</math>

And the updated covariance is the predicted covariance, minus the predicted measurement covariance, weighted by the Kalman gain.

- <math>\textbf{P}_{k|k} = \textbf{P}_{k|k-1} - K_{k} \textbf{P}_{z_{k}z_{k}} K_{k}^{T} </math>
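A sketch of the UKF update in the widely used additive-noise form, in which R<sub>k</sub> is added to the predicted measurement covariance instead of augmenting the state with the measurement noise; this is a simplification compared with the augmented formulation above. With a linear h it should reproduce the standard Kalman update, which the usage example checks. The function name and matrices are illustrative.

```python
import numpy as np


def ukf_update(x_pred, P_pred, z, h, R, alpha=1e-3, beta=2.0, kappa=0.0):
    """Hypothetical helper: UKF measurement update, additive-noise variant."""
    L = len(x_pred)
    lam = alpha**2 * (L + kappa) - L
    A = np.linalg.cholesky((L + lam) * P_pred)
    chi = np.vstack([x_pred, x_pred + A.T, x_pred - A.T])   # sigma points
    Ws = np.full(2 * L + 1, 1.0 / (2 * (L + lam)))
    Wc = Ws.copy()
    Ws[0] = lam / (L + lam)
    Wc[0] = Ws[0] + (1 - alpha**2 + beta)
    gamma = np.array([h(c) for c in chi])        # sigma points through h
    z_hat = Ws @ gamma                           # predicted measurement
    dz = gamma - z_hat
    dx = chi - x_pred
    P_zz = (Wc[:, None] * dz).T @ dz + R         # predicted measurement covariance
    P_xz = (Wc[:, None] * dx).T @ dz             # state-measurement cross-covariance
    K = P_xz @ np.linalg.inv(P_zz)
    x_new = x_pred + K @ (z - z_hat)
    P_new = P_pred - K @ P_zz @ K.T
    return x_new, P_new


H = np.array([[1.0, 0.0]])
x_pred, P_pred = np.array([1.0, 0.5]), np.array([[2.0, 0.3], [0.3, 1.0]])
R, z = np.array([[0.5]]), np.array([1.8])
x_u, P_u = ukf_update(x_pred, P_pred, z, lambda v: H @ v, R)

K = P_pred @ H.T @ np.linalg.inv(H @ P_pred @ H.T + R)   # linear Kalman update for comparison
print(np.allclose(x_u, x_pred + K @ (z - H @ x_pred)), np.allclose(P_u, P_pred - K @ H @ P_pred))
```

For a non-linear h the same function applies unchanged; only the comparison with the linear update stops being exact.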

Kalman–Bucy filter

The Kalman–Bucy filter is a continuous time version of the Kalman filter.[3][4]

It is based on the state space model

- <math>\frac{d}{dt}\mathbf{x}(t) = \mathbf{F}(t)\mathbf{x}(t) + \mathbf{w}(t)</math>

- <math>\mathbf{z}(t) = \mathbf{H}(t) \mathbf{x}(t) + \mathbf{v}(t)</math>

where the covariances of the noise terms <math>\mathbf{w}(t)</math> and <math>\mathbf{v}(t)</math> are given by <math>\mathbf{Q}(t)</math> and <math>\mathbf{R}(t)</math>, respectively.

The filter consists of two differential equations, one for the state estimate and one for the covariance:

- <math>\frac{d}{dt}\hat{\mathbf{x}}(t) = \mathbf{F}(t)\hat{\mathbf{x}}(t) + \mathbf{K}(t) (\mathbf{z}(t)-\mathbf{H}(t)\hat{\mathbf{x}}(t))</math>

- <math>\frac{d}{dt}\mathbf{P}(t) = \mathbf{F}(t)\mathbf{P}(t) + \mathbf{P}(t)\mathbf{F}^{T}(t) + \mathbf{Q}(t) - \mathbf{K}(t)\mathbf{R}(t)\mathbf{K}^{T}(t)</math>

where the Kalman gain is given by

- <math>\mathbf{K}(t)=\mathbf{P}(t)\mathbf{H}^{T}(t)\mathbf{R}^{-1}(t)</math>

Note that in this expression for <math>\mathbf{K}(t)</math> the covariance of the observation noise <math>\mathbf{R}(t)</math> represents at the same time the covariance of the prediction error (or innovation) <math>\tilde{\mathbf{y}}(t)=\mathbf{z}(t)-\mathbf{H}(t)\hat{\mathbf{x}}(t)</math>; these covariances are equal only in the case of continuous time.[5]

The distinction between the prediction and update steps of discrete-time Kalman filtering does not exist in continuous time.

The second differential equation, for the covariance, is an example of a Riccati equation.
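For a scalar system the covariance equation can be integrated numerically and compared with its steady state, obtained by setting dP/dt = 0 (the algebraic Riccati equation). This sketch uses a simple forward-Euler step and hypothetical constants F = −1, H = 1, Q = R = 1; the function name is illustrative.

```python
import math


def riccati_steady_state(f, h, q, r, p0=1.0, dt=1e-3, t_end=20.0):
    """Hypothetical helper: Euler-integrate dP/dt = 2 f P + q - K r K with K = P h / r."""
    p = p0
    for _ in range(int(t_end / dt)):
        k = p * h / r                            # Kalman-Bucy gain K(t)
        p += dt * (2.0 * f * p + q - k * r * k)  # scalar form of the covariance equation
    return p


f, h, q, r = -1.0, 1.0, 1.0, 1.0
p_num = riccati_steady_state(f, h, q, r)
# Setting dP/dt = 0 gives h^2 P^2 / r - 2 f P - q = 0; take the positive root.
p_exact = r * (f + math.sqrt(f * f + h * h * q / r)) / (h * h)
print(abs(p_num - p_exact) < 1e-6)  # True
```

Here the steady-state covariance is √2 − 1 ≈ 0.414, and the corresponding constant gain K = P H / R turns the state equation into a time-invariant linear filter.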

- [1] Roweis, S. and Ghahramani, Z., A unifying review of linear Gaussian models, Neural Comput. Vol. 11, No. 2 (February 1999), pp. 305-345.

- [2] Template:Cite journal

- [3] Bucy, R. S. and Joseph, P. D., Filtering for Stochastic Processes with Applications to Guidance, John Wiley & Sons, 1968; 2nd edition, AMS Chelsea Publ., 2005. ISBN 0821837826

- [4] Jazwinski, Andrew H., Stochastic Processes and Filtering Theory, Academic Press, New York, 1970. ISBN 0123815509

- [5] Kailath, Thomas, "An innovation approach to least-squares estimation Part I: Linear filtering in additive white noise", IEEE Transactions on Automatic Control, 13(6), 646-655, 1968